Overview

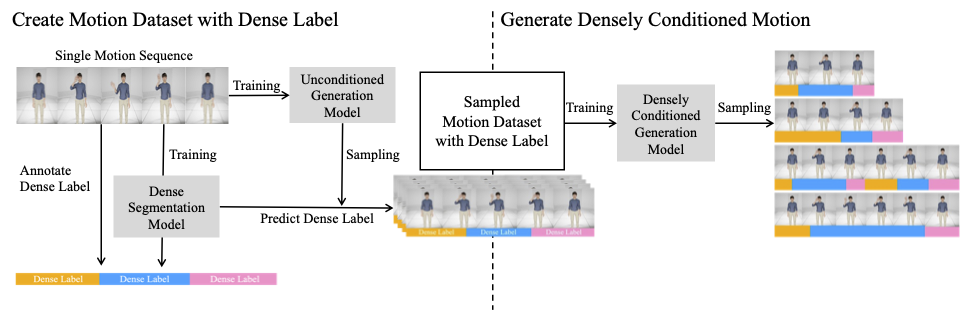

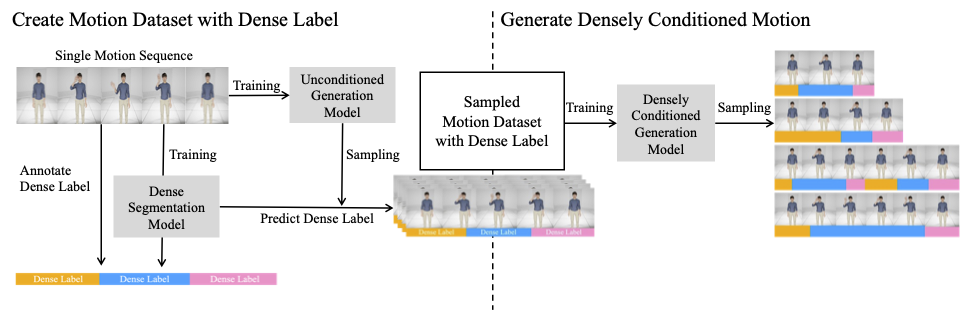

Our pipeline consists of two parts: creating a motion dataset with dense labels, and generating densely conditioned motion.

Motion synthesis research has long been constrained by data scarcity, which limits the diversity and controllability of generated motions. We address this challenge with a novel approach for generating diverse and controllable motion sequences from a single annotated sample. Our method creates variations that maintain the desired actions while introducing diversity in timing and movement. The process has two stages: we first expand the dataset using an unconditioned diffusion model and automatically label the generated samples, then employ a conditioned diffusion model with an efficient structure that allows precise control over the timing and characteristics of each motion segment. This approach preserves semantic integrity while enabling motion sequences of any desired length, even from a single input sample. We introduce a U-Net-based architecture adapted to accommodate varying sequence lengths in our diffusion model. This allows us to generate motion sequences that retain their intended meaning while incorporating necessary variations in timing and phase, enhancing the controllability of motion adjustments. Experimental results across multiple datasets, including challenging scenarios with complex motions, demonstrate the robustness of our method. Our model effectively follows conditioning and optimizes the number of generated motions necessary for constructing a comprehensive dataset. The generated sequences are both realistic and diverse, showing that the model balances fidelity with variability while significantly reducing the risk of overfitting in data-scarce settings. Our contributions include a method for creating diverse, controllable motions from a single input, an efficient data expansion technique, and a flexible conditioning structure for precise motion control regardless of sequence length. Our approach can be applied to animation, gaming, and virtual reality.
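To make the idea of dense, per-frame conditioning more concrete, the sketch below shows one way a segment-level description could be expanded into a label for every frame, and how timing changes or repetition could be expressed before conditioning a model. The `(label, length)` segment format and the helper names `dense_labels`, `adjust_timing`, and `repeat` are assumptions made for illustration; the page does not specify the actual label representation used by the method.

```python
from typing import List, Tuple

# Hypothetical format: (action label, number of frames in the segment).
Segment = Tuple[str, int]


def dense_labels(segments: List[Segment]) -> List[str]:
    """Expand (label, length) segments into one label per frame."""
    frames: List[str] = []
    for label, length in segments:
        if length <= 0:
            raise ValueError("segment length must be positive")
        frames.extend([label] * length)
    return frames


def adjust_timing(segments: List[Segment], index: int, new_length: int) -> List[Segment]:
    """Return a copy in which segment `index` lasts `new_length` frames."""
    out = list(segments)
    label, _ = out[index]
    out[index] = (label, new_length)
    return out


def repeat(segments: List[Segment], times: int) -> List[Segment]:
    """Return the segment list repeated `times` times back to back."""
    return list(segments) * times


# Illustrative labels only; not taken from any of the datasets on this page.
base = [("walk", 3), ("jump", 2)]
stretched = adjust_timing(base, 1, 4)   # make the jump last 4 frames
looped = repeat(base, 2)                # play the whole sequence twice

print(dense_labels(base))
print(len(dense_labels(stretched)), len(dense_labels(looped)))
```

Under these assumptions, the per-frame label list is what a conditioned model would receive, so changing a segment length or repeating the list changes the target sequence length without altering the action labels themselves.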

NCSOFT Dataset

Adjust Timing

Repeat

MIXAMO

BABEL
